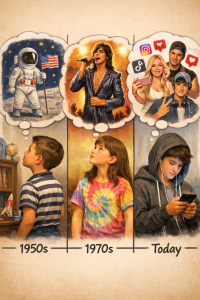

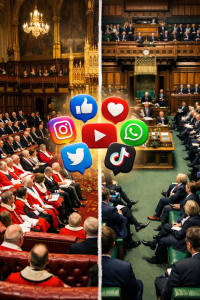

Technology can now fabricate images, videos, and audio so convincingly that distinguishing reality from fiction is increasingly difficult. Deepfakes, meaning content manipulated or generated using artificial intelligence, are no longer the stuff of science fiction. They are real, accessible, and can be used to impersonate anyone.

The Warning Sign

Detecting deepfakes often requires careful attention. Subtle inconsistencies in speech patterns, slight facial glitches, or unnatural movements are common indicators. Unusual requests via video or voice, especially when they deviate from normal communication patterns, should raise immediate suspicion. Even if something looks authentic at first glance, your instincts may pick up on the small details that do not feel right.

The Risk

Deepfakes present multiple dangers. Individuals can be targeted for financial fraud or blackmail. Public figures may suffer reputational damage or false accusations. On a wider scale, misinformation can spread rapidly, influencing opinions and decisions based on entirely fabricated evidence. These risks are not hypothetical. They are happening now and affect ordinary people as well as organisations.

What Individuals Can Do

- Verify through secondary channels: Before acting on an unexpected request, confirm the person's identity through a separate channel you already trust, such as a known phone number, a known email address, or an in-person conversation.

- Scrutinise content: Look for glitches, mismatched lighting, unnatural movements, or inconsistencies in audio.

- Question unusual requests: A sudden demand for money or sensitive information, or any out-of-character instruction, should always trigger caution.

- Educate yourself: Stay informed about the latest AI tools and manipulation techniques so you can recognise red flags quickly.

The Ides Moment

Deepfakes are most dangerous when they look real but feel slightly off. Like the warning to beware the Ides of March, this technology is a reminder that appearances can be deceptive. Awareness and vigilance are essential. Trust your instincts, check before you act, and never assume that what you see or hear is automatically true.
